If you’re still defending SEO budget with rankings and organic traffic, you’ve probably…

The uncomfortable truth about AI search is that you are no longer…

Healthcare SEO is the practice of optimizing medical websites, provider directories, and health-related…

Most content doesn’t fail because it’s bad — it fails because nobody optimized…

A practical walkthrough of how our SEO team at Relevance actually does keyword…

After years of running SEO programs at Relevance, three strategies consistently outperform everything…

Early in my career I promised a client I could tell them exactly…

If your team is publishing solid content, maintaining technical SEO and still not…

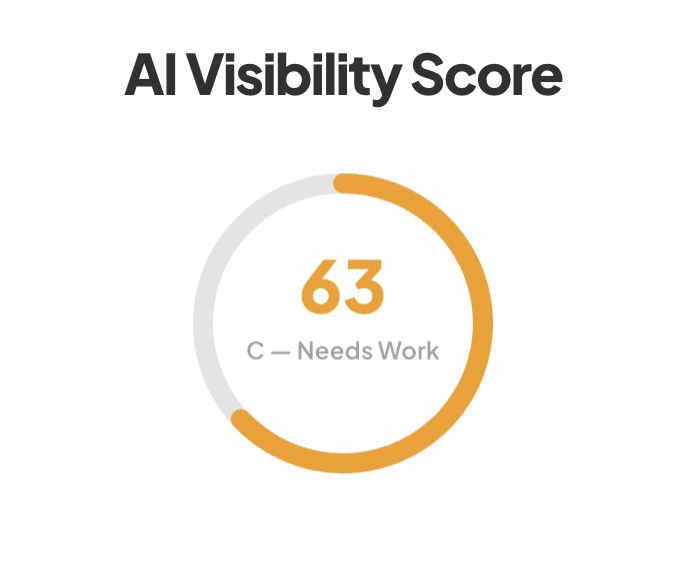

If you’re leading growth right now, this question doesn’t come up in a…

Our complete step-by-step keyword research process at Relevance — from defining topical territories…

If you’ve watched your organic traffic flatten while impressions hold steady, you’re not…

The three core search engine optimization techniques — technical SEO, on-page optimization, and…